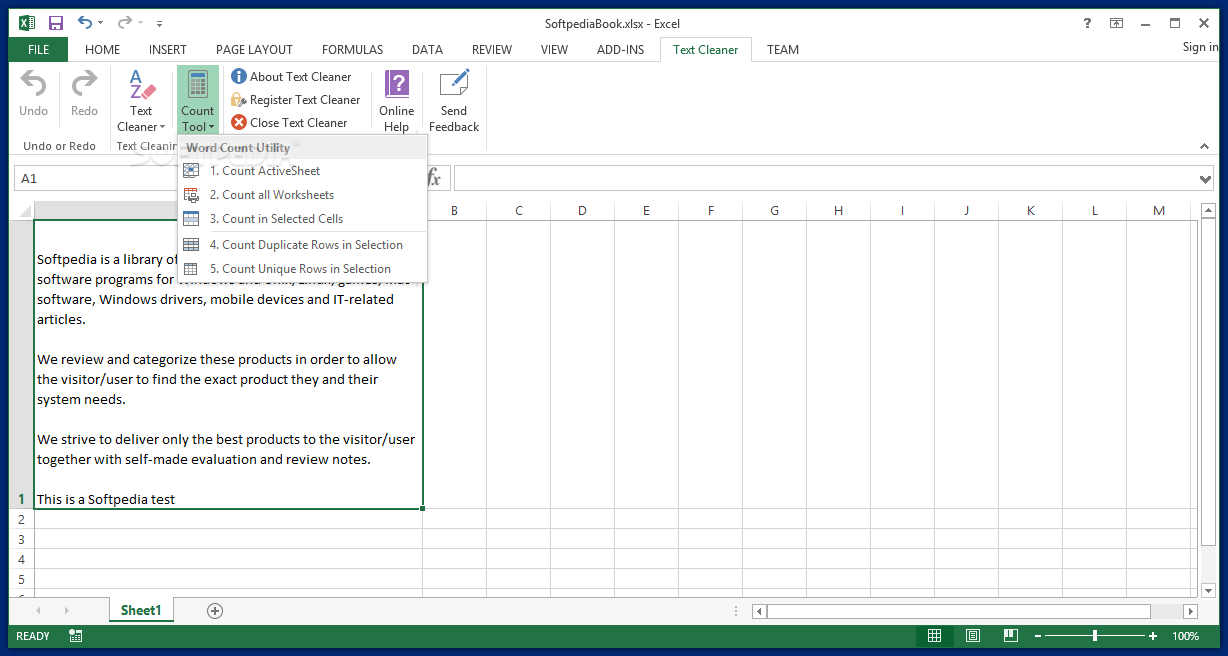

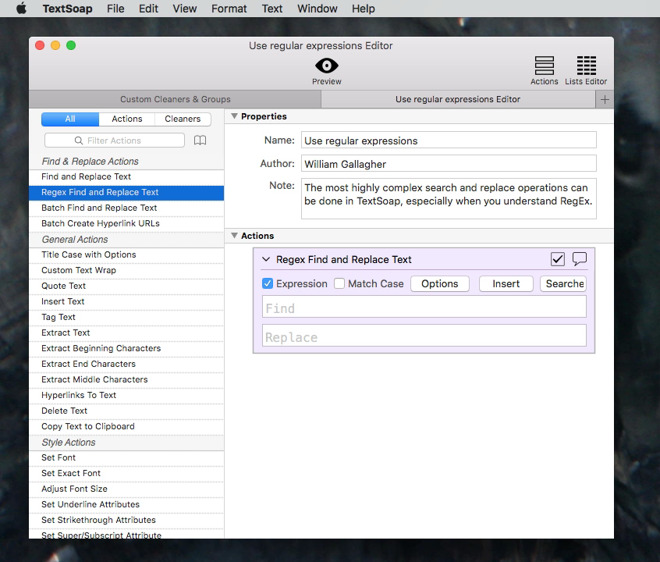

The `nltk.text` module brings together a variety of NLTK functionality for text analysis and provides simple, interactive interfaces. Functionality includes concordancing, collocation discovery, regular expression search over tokenized strings, and distributional similarity.

Its `Text` class is a wrapper around a sequence of simple (string) tokens, intended to support initial exploration of texts via the interactive console. Its methods perform a variety of analyses on the text's contexts (e.g., counting, concordancing, collocation discovery) and display the results:

- `findall(regexp)`: Find instances of the regular expression in the text. The text is a list of tokens, and a regexp pattern to match a single token must be surrounded by angle brackets.
- `generate(length=100, text_seed=None, random_seed=42)`: Print random text, generated using a trigram language model. `length` (int) is the length of text to generate (default=100). `text_seed` (list(str)) lets generation be conditioned on preceding context. `random_seed` (int) is a random seed or an instance of `random.Random`; it makes the random sampling part of generation reproducible.
- `dispersion_plot(words)`: Produce a plot showing the distribution of the words through the text. `words` (list(str)) are the words to be plotted. See also `nltk.draw.dispersion_plot()`.
- `common_contexts(words, num=20)`: Find contexts where the specified words appear, listing the most frequent common contexts first. `words` (str) are the words used to seed the similarity search; `num` (int) is the number of words to generate (default=20). See also `ContextIndex.common_contexts()`.
- `similar(word, num=20)`: Distributional similarity: find other words which appear in the same contexts as the specified word, listing the most similar words first. `word` (str) is the word used to seed the similarity search; `num` (int) is the number of words to generate (default=20). See also `ContextIndex.similar_words()`.
- `index(word)`: Find the index of the first occurrence of the word in the text.
- `count(word)`: Count the number of times this word appears in the text.
- `readability(method)`
- `collocation_list(num=20, window_size=2)`: Return collocations derived from the text, ignoring stopwords. `num` (int) is the maximum number of collocations to return; `window_size` (int) is the number of tokens spanned by a collocation (default=2).
- `collocations(num=20, window_size=2)`: Print collocations derived from the text, ignoring stopwords. `num` (int) is the maximum number of collocations to print; `window_size` (int) is the number of tokens spanned by a collocation (default=2). Example output: United States; fellow citizens; years ago; four years; Federal Government; General Government; American people; Vice President; God bless; Chief Justice; one another; fellow Americans; Old World; Almighty God; Fellow citizens; Chief Magistrate; every citizen; Indian tribes; public debt; foreign nations.
- `concordance_list(word, width=79, lines=25)`: Generate a concordance for `word` with the specified context window. `word` (str or list) is the target word or phrase (a list of strings); `width` (int) is the width of each line, in characters (default=80); `lines` (int) is the number of lines to display (default=25). See also `ConcordanceIndex`.

Text preprocessing consists of a series of techniques aimed at preparing text for natural language processing (NLP) tasks. The main goal of cleaning text is to reduce the noise in the dataset while still retaining as much relevant information as possible. Noise in text comes in several forms, such as emojis, punctuation, different cases, and more.

NLTK-Data-Cleaning: the text cleaning data source is the book Metamorphosis by Franz Kafka, which is available for free from Project Gutenberg. Task-specific cleaning entails:

- Manual tokenization
- Tokenization and cleaning with NLTK
- Additional text cleaning considerations
- Tips for cleaning text for word embedding

More to follow.

Starting with a list of reviews: divide each review into sentences, clean the words (remove punctuation and extra characters), and tokenize.

For tweets held in a DataFrame, it helps to create a variable for easier use of `tempdf.loc[:, 'text']`. Deleting stopwords in a sentence is described in "Stopword removal with NLTK": `cleanwordlist = [i for i in sentence.lower()...`. NLTK provides a `TweetTokenizer` to clean the tweets, and the `re` package provides good solutions for using regex.
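As a rough illustration of the review-cleaning steps mentioned above (divide into sentences, strip punctuation and extra characters, lowercase, tokenize, drop stopwords), here is a minimal sketch that uses only Python's `re` module. The function names, the tiny `STOPWORDS` set, and the sample reviews are all made up for illustration; a real pipeline would more likely rely on NLTK's tokenizers and its stopword list.

```python
import re

# Hypothetical, deliberately tiny stopword set (illustration only).
STOPWORDS = {"a", "an", "and", "is", "it", "the", "this", "to", "was"}


def split_sentences(review):
    """Naively split a review after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", review.strip())
    return [part for part in parts if part]


def clean_and_tokenize(sentence):
    """Lowercase, replace non-alphanumeric characters with spaces, drop stopwords."""
    lowered = sentence.lower()
    # Assumption: ASCII-only text. Accented letters and emojis are removed too,
    # and apostrophes split words ("don't" becomes "don", "t").
    no_punct = re.sub(r"[^a-z0-9\s]", " ", lowered)
    return [token for token in no_punct.split() if token not in STOPWORDS]


def clean_reviews(reviews):
    """Return, for each review, a list of token lists (one per sentence)."""
    return [
        [clean_and_tokenize(sentence) for sentence in split_sentences(review)]
        for review in reviews
    ]


reviews = ["The plot was GREAT!!! Loved it :)", "Too long. Not worth the price..."]
cleaned = clean_reviews(reviews)
print(cleaned)
# [[['plot', 'great'], ['loved']], [['too', 'long'], ['not', 'worth', 'price']]]
```

Keeping sentence splitting and word cleaning in separate functions makes it easy to swap either step for an NLTK tokenizer later without touching the rest.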

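`concordance(word, width=79, lines=25)` prints a concordance for `word` with the specified context window. Its parameters match `concordance_list`: `word` (str or list) is the target word or phrase, `width` (int) is the width of each line in characters, and `lines` (int) is the number of lines to display.

A `Text` is created with `__init__(tokens, name=None)`, where `tokens` (sequence of str) is the source text. For example: `>>> import nltk.corpus`, `>>> from nltk.text import Text`, `>>> moby = Text(nltk.corpus.gutenberg.words('melville-moby_dick.txt'))`.

UPDATE: I tried switching to a batch version that filters each tree with `tokens = [… for leaf in tree if type(leaf) != nltk.Tree]`, but it's only slightly faster.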

I wrote a couple of user-defined functions to remove named entities (using NLTK) in Python from a list of text sentences/paragraphs. The problem I'm having is that my method is very slow, especially for large amounts of data. Does anyone have a suggestion for how to optimize this to make it run faster?

The code starts with `import nltk`, chunks each text with `chunked = nltk.ne_chunk(nltk.pos_tag(tokens))`, keeps only the leaves that are not subtrees with `tokens = [… for leaf in chunked if type(leaf) != nltk.Tree]`, and returns the rejoined string with `return("".join(…).strip())`.

To use the code I typically have a text list and call the `ne_removal` function through a list comprehension.
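For readers trying to picture the approach, here is a hedged sketch of a batch variant. It is not the asker's code: `keep_non_entity_words` and `remove_entities_batch` are hypothetical names, rejoining the words with single spaces is an assumption, and it filters with `isinstance(leaf, tuple)` rather than the `type(leaf) != nltk.Tree` check quoted in the question. The NLTK calls used (`nltk.word_tokenize`, `nltk.pos_tag_sents`, `nltk.ne_chunk_sents`) require NLTK and its tokenizer, tagger, and chunker data to be installed. As the update in the thread notes, batching may bring only a modest speedup.

```python
def keep_non_entity_words(chunked):
    """Return the words of a chunked sentence, skipping named-entity subtrees.

    In the output of NLTK's NE chunker, ordinary tokens are (word, tag) tuples
    and named entities are nested nltk.Tree objects, so keeping only the
    tuples drops the entities.
    """
    return [leaf[0] for leaf in chunked if isinstance(leaf, tuple)]


def remove_entities_batch(texts):
    """Remove named entities from many texts, tagging and chunking in batches."""
    import nltk  # imported lazily; needs NLTK plus its downloaded models

    tokenized = [nltk.word_tokenize(text) for text in texts]
    tagged = nltk.pos_tag_sents(tokenized)       # one tagger pass over all texts
    chunked_sents = nltk.ne_chunk_sents(tagged)  # one chunker pass over all texts
    # Assumption: words are rejoined with single spaces, so the original
    # spacing around punctuation is not restored.
    return [" ".join(keep_non_entity_words(chunked)) for chunked in chunked_sents]


# Stand-in for a chunked sentence: the nested list plays the role of an
# nltk.Tree holding the entity "Alice", so this demo runs without NLTK.
sample = [[("Alice", "NNP")], ("lives", "VBZ"), ("here", "RB")]
print(keep_non_entity_words(sample))  # ['lives', 'here']
```

With NLTK and its data available, usage would look like `remove_entities_batch(text_list)` in place of calling a per-text function inside a list comprehension.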
